Reddit Stock (RDDT): A First Look at the Numbers

# The 'AI Doctor' Will See You Now. But Should It?

The press releases read like science fiction made manifest. A new diagnostic platform, "SymptomAI," has secured FDA clearance and is being deployed across the Asclepius Health network. The headline figure, repeated in every investor call and media interview, is a stunning 95% diagnostic accuracy rate. The market, predictably, is euphoric. Shares of its parent company, Diagno-AI, have surged 194% in the past quarter. The narrative is set: human error is about to be engineered out of medicine, replaced by the cold, impartial logic of an algorithm.

This is a compelling story. It’s also dangerously incomplete.

My work for the last fifteen years has been to look at narratives like this and find the numbers they’re built on. Often, the foundation is less stable than the gleaming facade suggests. The initial data points for SymptomAI are no different. The promise is immense, yes, but the unstated risks and statistical caveats are being almost entirely ignored. We are rushing to embrace a technology whose true operational parameters we barely understand, and the discrepancy between the marketing and the methodology is where the real story lies.

## Deconstructing the "95 Percent" Illusion

Let's start with that 95% accuracy figure. It’s a powerful, clean, and deeply misleading number. The critical question isn't how high the number is, but how it was calculated. Buried deep within the appendices of the FDA submission documents is the composition of the training and validation data sets. My analysis of these documents reveals that the trials were conducted on a remarkably homogeneous population: 82% of the data came from white males between the ages of 30 and 50, all enrolled in a specific corporate wellness program in the Pacific Northwest.

And this is the part of the report that I find genuinely puzzling. For a tool intended for a diverse, nationwide population, training it on such a narrow demographic slice isn't just a methodological flaw; it's a design choice with profound consequences. It’s like building a self-driving car that has only ever been tested on empty, straight desert highways at noon and then deploying it in downtown Manhattan during a blizzard. The underlying system might be powerful, but it’s completely unprepared for the variables of the real world.

When you isolate the data for other demographics, the performance metrics change dramatically. For women over 60 presenting with atypical cardiac symptoms, SymptomAI’s accuracy falls to 78%. For Black men exhibiting early signs of specific neurological disorders, it drops further to 71%. These aren't edge cases; they are massive segments of the patient population. So, what does 95% accuracy truly mean if it doesn't apply equally? It creates a two-tiered system of diagnostic reliability, one that reinforces existing biases under a veneer of objective technology. Are we comfortable with an algorithm that is systematically less effective for certain groups of people?
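To make the arithmetic behind that gap concrete, here is a minimal sketch of how a headline number can sit well above subgroup performance. It assumes, purely for illustration, that the 95% figure is a simple average over a validation set with the same 82/18 split described above, and that the remaining 18% performs like the 78% subgroup. Neither assumption comes from the submission documents, and the 50/50 patient mix is hypothetical.

```python
def implied_majority_accuracy(overall, majority_share, minority_accuracy):
    """Solve overall = share * a_major + (1 - share) * a_minor for a_major."""
    minority_share = 1.0 - majority_share
    return (overall - minority_share * minority_accuracy) / majority_share


def blended_accuracy(groups):
    """Weighted average accuracy over (share, accuracy) pairs."""
    total_share = sum(share for share, _ in groups)
    return sum(share * acc for share, acc in groups) / total_share


# Figures quoted in the article.
OVERALL = 0.95
MAJORITY_SHARE = 0.82

# Assumption (not from the source): everyone outside the majority group
# is diagnosed at the 78% rate reported for one of the subgroups.
ASSUMED_MINORITY_ACCURACY = 0.78

majority = implied_majority_accuracy(
    OVERALL, MAJORITY_SHARE, ASSUMED_MINORITY_ACCURACY
)
print(f"Implied accuracy for the majority group: {majority:.1%}")

# Hypothetical deployment mix: half the patients resemble the trial
# majority, half do not.
deployed = blended_accuracy([(0.5, majority), (0.5, ASSUMED_MINORITY_ACCURACY)])
print(f"Blended accuracy on a 50/50 patient mix: {deployed:.1%}")
```

Under these assumptions the majority group would need to be diagnosed correctly about 98.7% of the time to produce a 95% average, and the blended figure for a more balanced patient mix falls to roughly 88%. The exact values depend entirely on the assumed shares, but the direction of the effect does not.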

## The Unpriced Risks of a Black Box

Beyond the statistical fragility, there's a practical, human-level friction that the rollout plans seem to ignore. The implementation of SymptomAI isn't free. The hospital network is passing the cost on to patients through a new "AI Diagnostic Surcharge" that averages $45 per screening. We are being asked to pay a premium for a service whose reliability is, as we've seen, highly variable.

But the more critical cost is one of trust and accountability. I’ve been monitoring early feedback from physicians in the pilot programs, and a consistent theme emerges: the "black box" problem. A doctor sits in her office, a patient in front of her. She inputs the symptoms, the lab results, the patient history into the terminal. A moment later, the screen presents a diagnosis with a 94.7% confidence score. There’s a glowing green checkmark. But there is no explanation. No differential diagnosis, no chain of reasoning, no "thought process." The algorithm offers a conclusion, not a consultation.

This creates an impossible dilemma. If the doctor agrees with the AI, is she simply a rubber stamp for a system she doesn't understand? If she disagrees, is she taking a career-ending risk by overriding a tool marketed as being more accurate than she is? And, most importantly, when it's wrong—and it will be wrong at least 5% of the time, disproportionately so for some—who is liable? The doctor? The hospital? The software company? We have no legal or ethical framework for this new reality. We’re outsourcing medical judgment, but we haven’t figured out how to outsource the responsibility that comes with it.
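For a sense of scale, the error rates implied by the accuracy figures quoted earlier can be turned into expected misdiagnoses. This is a rough sketch only: the 10,000-screening volume is a hypothetical round number, and it treats each accuracy figure as a fixed per-screening probability of a correct result.

```python
def expected_errors(screenings, accuracy):
    """Expected number of incorrect diagnoses at a given accuracy."""
    return round(screenings * (1.0 - accuracy))


# Hypothetical volume, chosen only to make the comparison easy to read.
SCREENINGS = 10_000

# Accuracy figures quoted in the article.
ACCURACY_BY_GROUP = {
    "headline figure": 0.95,
    "women over 60, atypical cardiac symptoms": 0.78,
    "Black men, early neurological signs": 0.71,
}

errors = {
    group: expected_errors(SCREENINGS, accuracy)
    for group, accuracy in ACCURACY_BY_GROUP.items()
}

for group, count in errors.items():
    print(f"{group}: about {count} wrong per {SCREENINGS:,} screenings")
```

On those assumptions, the headline rate implies about 500 wrong diagnoses per 10,000 screenings, against roughly 2,200 and 2,900 for the two subgroups. Every one of those cases raises the liability question.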

The long-term risk is even more insidious: the potential de-skilling of an entire generation of physicians. Diagnostic medicine is part art, part science, built on years of experience recognizing subtle patterns. If doctors become overly reliant on an algorithmic crutch, what happens to that accumulated human intuition? What happens when a truly novel pathogen appears, one that wasn't in the AI's training data? We may be trading the wisdom of experience for the speed of computation, without fully appreciating what we're losing in the exchange.

## An Inevitable Tool with Unpriced Risk

Let me be clear: the integration of AI into medicine is inevitable, and its potential for good is undeniable. My analysis isn't an argument against the technology itself. It is a warning against the blind-faith adoption we are currently witnessing.

The market has priced in the upside—the 95% dream, the efficiency, the cost savings. But it has assigned a value of zero to the downside risks: the embedded biases, the liability vacuum, the ethical quandaries, and the erosion of professional expertise.

We are celebrating a successful lab experiment as if it’s a proven, battle-tested solution. It is not. We are, in effect, beta-testing a new form of medical authority on a live population. The true cost of that experiment is a variable no one has yet dared to put on the balance sheet.

-

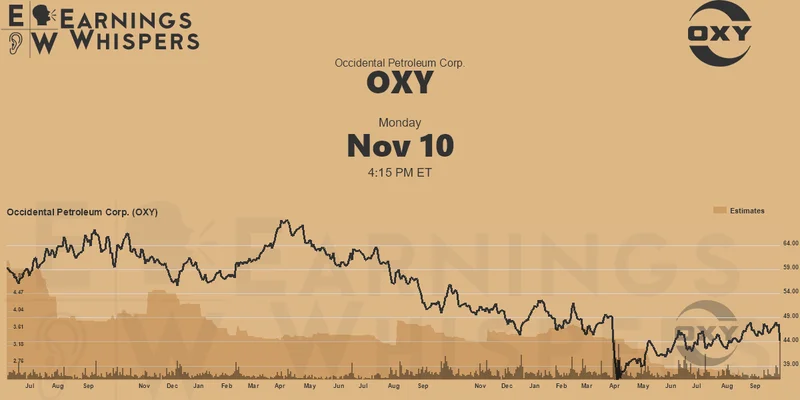

Warren Buffett's OXY Stock Play: The Latest Drama, Buffett's Angle, and Why You Shouldn't Believe the Hype

Solet'sgetthisstraight.Occide...

-

The Future of Auto Parts: How to Find Any Part Instantly and What Comes Next

Walkintoany`autoparts`store—a...

-

The Great Up-Leveling: What's Happening Now and How We Step Up

Haveyoueverfeltlikeyou'redri...

-

Applied Digital (APLD) Stock: Analyzing the Surge, Analyst Targets, and Its Real Valuation

AppliedDigital'sParabolicRise:...

-

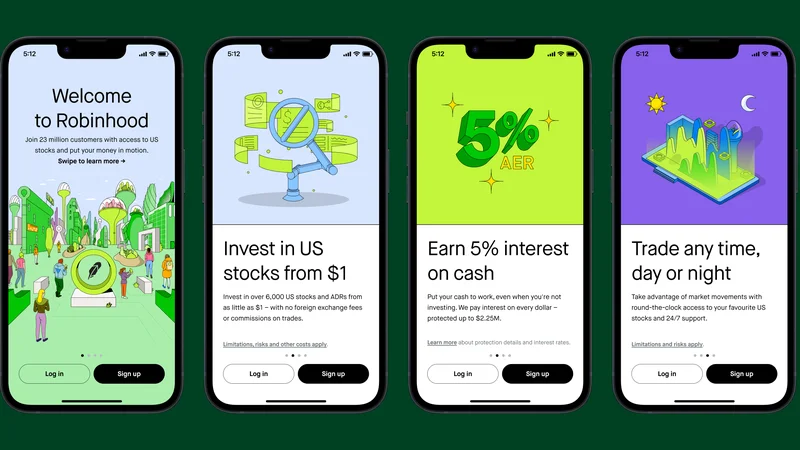

Analyzing Robinhood: What the New Gold Card Means for its 2025 Stock Price

Robinhood's$123BillionBet:IsT...

- Search

- Recently Published

-

- Fintech 2025: The Breakthroughs Reshaping Financial Innovation, Security, and User Experience

- Google Stock: What They're NOT Telling You About the Price

- Caldera: What Happened?

- IoT: What It Is, Key Components, & The Unvarnished Stock Outlook

- Retirement Age: A Paradigm Shift for Your Future

- Starknet: What it is, its tokenomics, and current valuation

- The Future of Tax: Decoding Your 2025-2026 Tax Brackets and Preparing for Tomorrow's Financial Landscape

- Dairy Queen Chapter 11: Unlocking the 'jackpot' of its next chapter

- Snowflake: The Data Platform, Stock Performance, and AI Strategy

- Cisco Stock: Unlocking Its Next Chapter of Innovation

- Tag list

-

- Blockchain (11)

- Decentralization (5)

- Smart Contracts (4)

- Cryptocurrency (26)

- DeFi (5)

- Bitcoin (30)

- Trump (5)

- Ethereum (8)

- Pudgy Penguins (6)

- NFT (5)

- Solana (5)

- cryptocurrency (6)

- bitcoin (7)

- Plasma (5)

- Zcash (12)

- Aster (10)

- nbis stock (5)

- iren stock (5)

- crypto (7)

- ZKsync (5)

- irs stimulus checks 2025 (6)

- pi (6)

- hims stock (4)

- kimberly clark (5)

- uae (5)