Shield AI's New AI Fighter Jet: The Tech and the Terrifying Reality

So, Shield AI just dropped a press release for its new killer drone, the "X-BAT," and the marketing department clearly worked overtime. They’re calling it the "holy grail of deterrence" and a "revolution in airpower." It takes off vertically, teams up with human pilots, and thinks for itself using some AI brain called "Hivemind." Sounds impressive, right? It's the kind of stuff you see in a slick, lens-flared promo video with a thumping electronic soundtrack.

Give me a break.

Every time one of these defense tech startups unveils a new toy, they talk like they’ve just solved world peace with an algorithm. “Airpower without runways,” co-founder Brandon Tseng says. It “buys diplomacy another day.” I’m sure it does. Nothing says "let's talk" like an autonomous hunter-killer drone that can launch from the deck of a ship 2,000 miles away. This isn't diplomacy; it's just a longer, more convenient stick.

They're selling us a future of sleek, self-piloting fighter jets that can think for themselves. It’s a compelling fantasy. No, 'fantasy' isn't the right word—it's a sales pitch. A very, very expensive sales pitch aimed at generals and politicians who love the idea of "affordable mass" and "attritable assets." Those are the buzzwords, by the way. "Attritable" is the sanitized, corporate-approved term for "we can afford to lose this thing." It means it's disposable. Which is a chilling way to talk about a machine designed to make life-or-death decisions.

Shield AI unveils X-BAT autonomous vertical takeoff fighter jet

Let’s be real about what’s being sold here. The X-BAT is designed to be a "collaborative combat aircraft," or CCA. A robot wingman. It promises to fly into dangerous places without a pilot, make tactical decisions without constant communication, and do it all cheaper than a traditional fighter jet. Shield AI says three of these things can fit in the space of one F-35. It’s the Costco model of air warfare: buy in bulk, save a bundle.

And they’re already expanding. Just days after this big reveal, they announced a team-up with South Korea's Hyundai Rotem to build more smart combat systems. The global conquest tour is already underway.

But here’s the part of the press release they leave in the fine print. This whole operation runs on the promise of AI. True, thinking AI. The kind that can adapt, learn, and execute complex missions on its own. They even point to the Air Force using their Hivemind software on an experimental F-16. See? It works! The Secretary of the Air Force even took a ride in it!

It's all very neat and tidy. It’s a perfect narrative. Except for one tiny, inconvenient problem: reality. The whole thing reminds me of when my building manager promised a "state-of-the-art smart laundry system." Now I just have an app that tells me a machine is broken after it ate my quarters. The promises are always shinier than the product.

AI drones in Ukraine — this is where we're at

While Silicon Valley defense bros are patting themselves on the back in press releases, there’s a real war happening in Ukraine where AI drones are actually being used. And guess what? It looks nothing like the commercials.

The grand vision of fully autonomous drones making complex decisions is, as one developer put it, a "holy grail" that remains "well out of reach." They're not even close. Andriy Chulyk, a Ukrainian drone engineer, put it best: "Tesla, for example, having enormous, colossal resources, has been working on self-driving for ten years and—unfortunately—they still haven’t made a product that a person can be sure of."

He’s right. We can't even trust a Tesla to not phantom brake on the highway, but we're supposed to be ready for an AI F-16 to dogfight on its own? It's absurd.

The "AI" everyone breathlessly talks about in Ukraine mostly boils down to one thing: "last-mile targeting." A human pilot flies the drone, picks a target on their screen, and the software keeps the drone locked on that cluster of pixels even if the signal cuts out. It’s basically the focus-tracking feature on a decent DSLR camera from ten years ago. It’s useful for getting around Russian jamming, of course, but it ain't Skynet. It's a glorified "point and click" adventure, and the drone is just following the last instruction it was given.
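To show how unglamorous "stay locked on that cluster of pixels" can be, here is a toy sketch of patch tracking in plain Python. It is an illustration under loud assumptions, not anyone's fielded software: the function names (`patch_cost`, `track`, `make_frame`), the tiny grayscale grids, and the sum-of-squared-differences matching are all hypothetical stand-ins chosen for clarity.

```python
def patch_cost(frame, template, top, left):
    """Sum of squared differences between the template and the frame patch at (top, left)."""
    return sum(
        (frame[top + r][left + c] - template[r][c]) ** 2
        for r in range(len(template))
        for c in range(len(template[0]))
    )


def track(frame, template, last_pos, radius=2):
    """Search a small window around last_pos for the patch that best matches the template."""
    th, tw = len(template), len(template[0])
    fh, fw = len(frame), len(frame[0])
    best_pos, best_cost = last_pos, None
    for top in range(max(0, last_pos[0] - radius), min(fh - th, last_pos[0] + radius) + 1):
        for left in range(max(0, last_pos[1] - radius), min(fw - tw, last_pos[1] + radius) + 1):
            cost = patch_cost(frame, template, top, left)
            if best_cost is None or cost < best_cost:
                best_pos, best_cost = (top, left), cost
    return best_pos


def make_frame(height, width, top, left, template):
    """Build a blank (all-zero) frame with the template pasted at (top, left). Test helper only."""
    frame = [[0] * width for _ in range(height)]
    for r, row in enumerate(template):
        for c, value in enumerate(row):
            frame[top + r][left + c] = value
    return frame


# The operator "clicks" a 2x2 cluster of pixels; after that, no operator input is used.
template = [[9, 7], [5, 8]]
frame1 = make_frame(8, 8, 2, 3, template)  # target where the operator picked it
frame2 = make_frame(8, 8, 3, 4, template)  # target has drifted one pixel down and right

pos = track(frame1, template, (2, 3))
pos = track(frame2, template, pos)
print(pos)
```

Notice what the sketch does not do: it has no idea what the pixels are. It only looks for whatever most resembles the patch the human picked, which is the whole point of the "last instruction it was given" complaint.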

Even the more advanced stuff is messy. In one test, Shield AI's own US-made V-BAT drone (a smaller cousin of the new X-BAT) was used to spot a target for a smaller kamikaze drone. It worked, but only after the kamikaze drone got lost for 20 minutes because a little rain blurred its camera. It eventually found its way, but this is the reality they don't show you in the keynote presentation. What happens when it’s not rain, but smoke, fog, or a clever piece of camouflage?

The Billion-Dollar Question Nobody's Answering

Here’s the part that really gets me. All this talk of autonomy and AI studiously avoids the one question that actually matters: identification.

Kate Bondar, a senior fellow at CSIS, points out that the visual data from Ukrainian drones comes from cheap, analog cameras. The AI can tell the difference between a tank and a person, sure. But it can’t tell the difference between a Russian soldier and a Ukrainian one. Or, more terrifyingly, a soldier and a civilian. That problem, she says, "has not been solved."

So we're building fleets of "attritable" drones that can be sent on one-way missions, controlled by software that can’t reliably tell friend from foe, and we’re calling it progress. It's a technological leap of faith off a moral cliff. Are we really just supposed to nod along as defense contractors sell us on a future where algorithms make kill decisions, while the grunts on the ground are still struggling with software that gets confused by a shadow?

Maybe I'm just a cynic. Maybe I'm the one who doesn't get it. It’s possible that this time, the promises are real and the tech will work flawlessly and ethically and...

No. That's insane. The truth is what analysts like Bondar suspect: a lot of this is just marketing. "War's also a business," she said. "You have to sell something...To have AI-enabled software, or some cool AI system, that's something that sounds really cool and sexy."

And that’s what this is all about. Selling a cool, sexy, and terrifyingly half-baked vision of the future. A future where the cost of failure isn't a buggy app, but a crater where a school used to be.

So We're Just Doing This, Huh?

At the end of the day, all the slick videos and breathless press releases can't hide the simple, ugly truth. We are rushing to hand over lethal decision-making to machines that are, by their creators' own admission, not ready. It's not a "revolution in airpower." It's a reckless gamble with software that's still in beta, and we're all being forced to live inside the experiment.

-

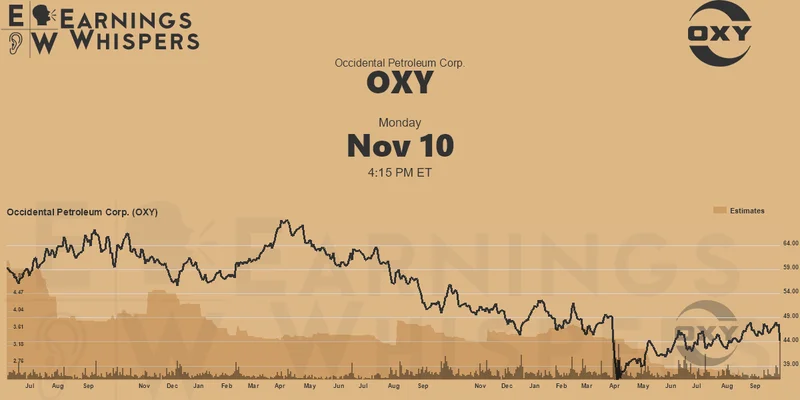

Warren Buffett's OXY Stock Play: The Latest Drama, Buffett's Angle, and Why You Shouldn't Believe the Hype

Solet'sgetthisstraight.Occide...

-

The Future of Auto Parts: How to Find Any Part Instantly and What Comes Next

Walkintoany`autoparts`store—a...

-

The Great Up-Leveling: What's Happening Now and How We Step Up

Haveyoueverfeltlikeyou'redri...

-

Applied Digital (APLD) Stock: Analyzing the Surge, Analyst Targets, and Its Real Valuation

AppliedDigital'sParabolicRise:...

-

Analyzing Robinhood: What the New Gold Card Means for its 2025 Stock Price

Robinhood's$123BillionBet:IsT...

- Search

- Recently Published

-

- Fintech 2025: The Breakthroughs Reshaping Financial Innovation, Security, and User Experience

- Google Stock: What They're NOT Telling You About the Price

- Caldera: What Happened?

- IoT: What It Is, Key Components, & The Unvarnished Stock Outlook

- Retirement Age: A Paradigm Shift for Your Future

- Starknet: What it is, its tokenomics, and current valuation

- The Future of Tax: Decoding Your 2025-2026 Tax Brackets and Preparing for Tomorrow's Financial Landscape

- Dairy Queen Chapter 11: Unlocking the 'jackpot' of its next chapter

- Snowflake: The Data Platform, Stock Performance, and AI Strategy

- Cisco Stock: Unlocking Its Next Chapter of Innovation

- Tag list

-

- Blockchain (11)

- Decentralization (5)

- Smart Contracts (4)

- Cryptocurrency (26)

- DeFi (5)

- Bitcoin (30)

- Trump (5)

- Ethereum (8)

- Pudgy Penguins (6)

- NFT (5)

- Solana (5)

- cryptocurrency (6)

- bitcoin (7)

- Plasma (5)

- Zcash (12)

- Aster (10)

- nbis stock (5)

- iren stock (5)

- crypto (7)

- ZKsync (5)

- irs stimulus checks 2025 (6)

- pi (6)

- hims stock (4)

- kimberly clark (5)

- uae (5)