beta technologies: what we know

The Ultimate Payoff? I'll Believe It When I See It

So, the buzz is all about "People Also Ask" and "Related Searches." Big deal. We're supposed to be impressed by the fact that algorithms can now regurgitate the questions we're already asking? Give me a break.

Search Engines: Asking the Real Questions?

Let's be real. "People Also Ask" is just a fancier version of autocomplete. It's not insightful; it's reactive. It's a mirror reflecting our collective, often misguided, curiosity.

And "Related Searches"? Please. That's just a thinly veiled attempt to keep us clicking, trapped in the echo chamber of our own biases. It's the internet equivalent of those "customers who bought this item also bought" suggestions on Amazon, except instead of buying more crap, we're just consuming more of the same information.

I mean, are we really supposed to believe that these algorithms are some kind of oracle, divining the deepest desires of the human soul? Or are they just cleverly exploiting our inherent laziness and confirmation bias? I'm going with the latter.

It's not even about asking the right questions. It's about who is asking, why they're asking, and what agenda is being served. And let's be clear: the agenda is always, always profit.

The Algorithm's "Intelligence": A Joke

The problem isn't just the questions themselves; it's the illusion of intelligence that these features create. We're so easily impressed by shiny interfaces and complex algorithms that we forget to think critically about what's actually happening.

It's like we're all standing around, gawking at a magician pulling rabbits out of a hat, completely oblivious to the fact that the rabbits were already in there to begin with.

Where's the actual thinking? Where's the originality? Where's the challenge to the status quo? All I see is a glorified search bar dressed up in fancy clothes.

And don't even get me started on the potential for manipulation. These algorithms aren't neutral arbiters of truth; they're tools that can be weaponized to spread misinformation, amplify biases, and shape public opinion.

Then again, maybe I'm the crazy one here. Maybe I'm just a grumpy old man yelling at a cloud. But I can't shake the feeling that we're being played.

The Human Element: Still Missing

What's missing is the human element. The nuance, the empathy, the ability to understand the unspoken. Algorithms can process data, but they can't understand context. They can identify patterns, but they can't grasp the underlying motivations.

It's like trying to understand a painting by analyzing its chemical composition. You might learn something about the pigments and the canvas, but you'll miss the soul of the artwork.

We need to be asking ourselves: Are these "smart" features actually making us smarter, or are they just making us more passive consumers of information? Are we becoming more informed, or just more easily manipulated?

Is This the Best We Can Do?

-

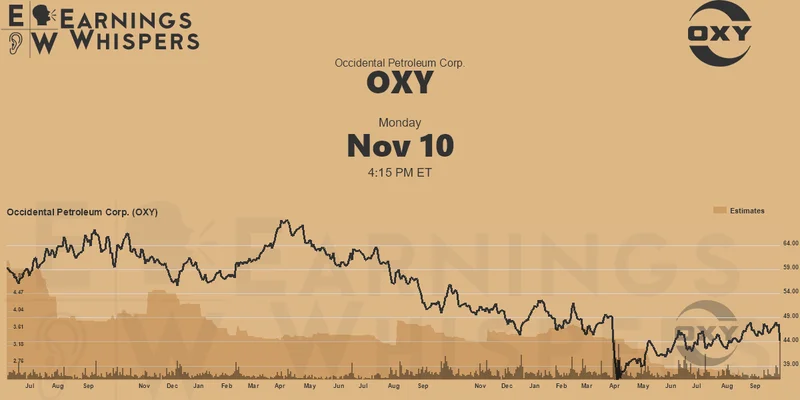

Warren Buffett's OXY Stock Play: The Latest Drama, Buffett's Angle, and Why You Shouldn't Believe the Hype

Solet'sgetthisstraight.Occide...

-

The Future of Auto Parts: How to Find Any Part Instantly and What Comes Next

Walkintoany`autoparts`store—a...

-

The Great Up-Leveling: What's Happening Now and How We Step Up

Haveyoueverfeltlikeyou'redri...

-

Applied Digital (APLD) Stock: Analyzing the Surge, Analyst Targets, and Its Real Valuation

AppliedDigital'sParabolicRise:...

-

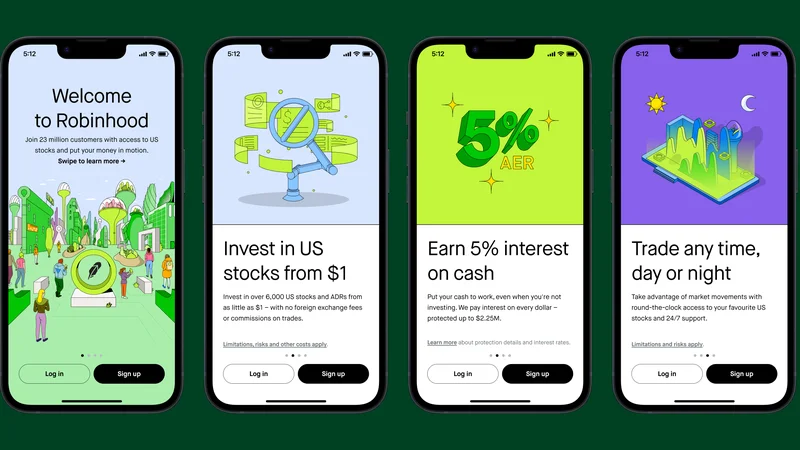

Analyzing Robinhood: What the New Gold Card Means for its 2025 Stock Price

Robinhood's$123BillionBet:IsT...
