
The Algorithmic Echo Chamber: Are "People Also Ask" Questions Really From People?

The "People Also Ask" (PAA) section, that ever-present box of questions Google serves up alongside search results, is supposedly a reflection of collective curiosity. Type in "electric vehicle range," and you're presented with a neat little list: "What is the range of most electric cars?", "How accurate are electric car range estimates?", and so on. But lately, I've been wondering: are these questions actually driven by organic human curiosity, or is something else at play?

It’s easy to assume that Google’s algorithm is simply surfacing the most frequently asked questions related to your search term. Makes sense, right? Except the rabbit hole goes deeper. These questions aren’t just FAQs; they’re often subtly framed, sometimes even leading the user down a specific path of inquiry. It's not a neutral reflection; it's a curated narrative. (And let's be honest, Google's never been shy about curating narratives.)

The Feedback Loop

The problem is that the PAA section creates a feedback loop. Google presents a question, people click on it, Google registers the click as validation, and the question gets shown to more people. It's a self-fulfilling prophecy. This is similar to how algorithmic trading can amplify market trends, regardless of underlying value. The algorithm isn't necessarily reflecting true demand; it's creating it.
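To make the loop concrete, here is a toy simulation. It is not Google's ranking system, whose details aren't public; the single display slot, the click rates, and the function name `simulate_feedback_loop` are all hypothetical. The only rule it encodes is the one described above: whatever is shown gets clicked, and clicks decide what is shown next.

```python
def simulate_feedback_loop(click_rate_pct, initial_scores, rounds, users_per_round=1000):
    """Toy model of a click feedback loop (hypothetical, NOT Google's algorithm).

    Each round, the single highest-scoring question is shown to every user.
    A fixed percentage of those users click it, and those clicks are added
    to its score. A question that is never shown never earns any clicks.
    """
    scores = list(initial_scores)
    for _ in range(rounds):
        # Show whichever question currently has the highest score (first one wins ties).
        shown = max(range(len(scores)), key=lambda i: scores[i])
        # Integer arithmetic keeps the example exact.
        clicks = click_rate_pct[shown] * users_per_round // 100
        # The clicks are treated as validation and fed straight back into the score.
        scores[shown] += clicks
    return scores


# Question B is genuinely more appealing (35% vs 30% click rate),
# but question A starts with a one-point head start.
final = simulate_feedback_loop(click_rate_pct=[30, 35], initial_scores=[1, 0], rounds=10)
print(final)  # [3001, 0]
```

Question B never gets a chance to show it would draw more clicks. A production ranker would likely mix in some randomness or exploration; this sketch leaves that out to isolate the loop itself.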

Consider a search for "best cloud storage." The PAA section might include questions like "Is Google Drive the most secure cloud storage?" Now, Google Drive might be a perfectly fine service, but the inclusion of that specific question subtly promotes it. Other, potentially better, options might be overlooked simply because they aren't part of the algorithmic echo chamber.

And this is the part of the analysis that I find genuinely troubling. It’s not about whether Google Drive is good or bad. It’s about the potential for these algorithms to shape public perception in ways that are difficult to detect. We think we’re seeing the collective wisdom of the crowd, but we might just be seeing the amplified voice of an algorithmically constructed crowd.

Related Searches: Another Piece of the Puzzle

Then there are the "Related Searches" at the bottom of the results page. These are supposed to offer alternative or more specific search terms. But again, the selection feels… deliberate. Are these truly the most common related searches, or the searches that Google wants you to make?

Here's where we need more transparency. How does Google determine what makes it into the PAA and Related Searches sections? What data do they use? What biases, if any, are built into the algorithms? Details on the algorithm's inner workings remain scarce, but the impact is clear.

I've looked at hundreds of these search results pages, and the degree of overlap between PAA and Related Searches is noteworthy. It suggests a coordinated effort to guide the user's search journey. It's like being in a conversation where someone keeps subtly steering you back to a pre-approved topic.
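The post doesn't say how that overlap was measured. One simple way to put a number on it, assuming you have copied down the PAA questions and Related Searches for a query, is Jaccard similarity over the words each box uses. The helper names, the short stopword list, and the sample Related Searches below are hypothetical; the two PAA questions are the electric-vehicle examples from the start of this post.

```python
import re

# Illustrative stopword list (an assumption); a real analysis would use a fuller one.
STOPWORDS = {"a", "an", "the", "is", "are", "what", "how", "of", "to", "for", "most", "do", "does"}


def terms(phrases):
    """Collect the lowercase, non-stopword words used across a list of phrases."""
    words = set()
    for phrase in phrases:
        for word in re.findall(r"[a-z]+", phrase.lower()):
            if word not in STOPWORDS:
                words.add(word)
    return words


def jaccard(a, b):
    """Size of the intersection divided by size of the union; 0.0 if both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


paa = [
    "What is the range of most electric cars?",
    "How accurate are electric car range estimates?",
]
# Hypothetical Related Searches for the same query.
related = ["electric car range comparison", "electric car range calculator"]

print(jaccard(terms(paa), terms(related)))  # 0.375
```

A score near 1 means the two boxes draw on nearly the same vocabulary; a score near 0 means they barely overlap. Without stemming, "car" and "cars" count as different words, so this version somewhat undercounts overlap.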

The Illusion of Choice

The real danger here isn't that Google is intentionally trying to deceive us (though that's always a possibility). It's that we're being lulled into a false sense of security. We think we're making informed decisions based on a wide range of information, but we're actually operating within a carefully constructed information bubble.

Think of it like this: Imagine you're trying to decide what restaurant to go to. You ask your friends for recommendations, but unbeknownst to you, all your friends have been paid by one particular restaurant to promote it. You think you're getting unbiased advice, but you're actually being subjected to a very subtle form of advertising.

The implications are far-reaching. If search engines are shaping our perceptions, they're also shaping our decisions. From what products we buy to what political opinions we hold, these seemingly innocuous algorithms could be having a profound impact on society.

Are We Asking the Right Questions?

It's time we started asking tougher questions about how these algorithms work. We need more transparency, more accountability, and more critical thinking. We need to recognize that the "People Also Ask" section isn't necessarily a reflection of what people actually want to know, but rather a reflection of what the algorithm thinks they should want to know. And that's a crucial distinction.

The Algorithm's Invisible Hand

-

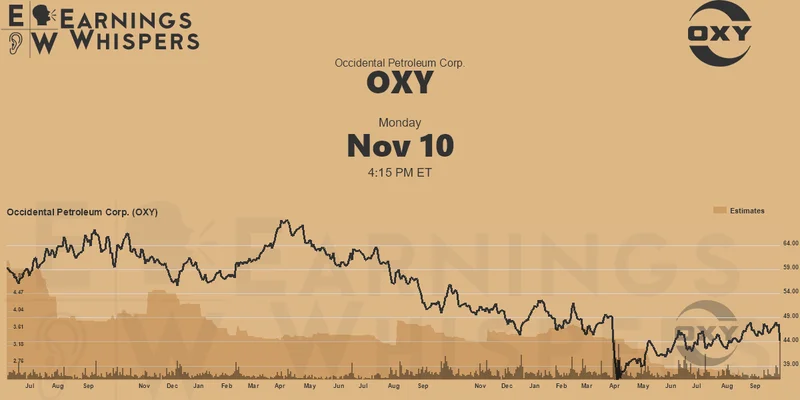

Warren Buffett's OXY Stock Play: The Latest Drama, Buffett's Angle, and Why You Shouldn't Believe the Hype

Solet'sgetthisstraight.Occide...

-

The Future of Auto Parts: How to Find Any Part Instantly and What Comes Next

Walkintoany`autoparts`store—a...

-

The Great Up-Leveling: What's Happening Now and How We Step Up

Haveyoueverfeltlikeyou'redri...

-

Applied Digital (APLD) Stock: Analyzing the Surge, Analyst Targets, and Its Real Valuation

AppliedDigital'sParabolicRise:...

-

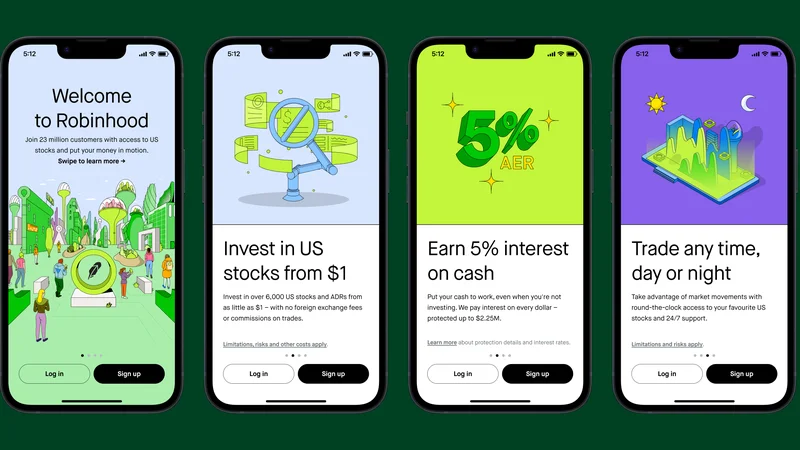

Analyzing Robinhood: What the New Gold Card Means for its 2025 Stock Price

Robinhood's$123BillionBet:IsT...

- Search

- Recently Published

-

- Fintech 2025: The Breakthroughs Reshaping Financial Innovation, Security, and User Experience

- Google Stock: What They're NOT Telling You About the Price

- Caldera: What Happened?

- IoT: What It Is, Key Components, & The Unvarnished Stock Outlook

- Retirement Age: A Paradigm Shift for Your Future

- Starknet: What it is, its tokenomics, and current valuation

- The Future of Tax: Decoding Your 2025-2026 Tax Brackets and Preparing for Tomorrow's Financial Landscape

- Dairy Queen Chapter 11: Unlocking the 'jackpot' of its next chapter

- Snowflake: The Data Platform, Stock Performance, and AI Strategy

- Cisco Stock: Unlocking Its Next Chapter of Innovation

- Tag list

-

- Blockchain (11)

- Decentralization (5)

- Smart Contracts (4)

- Cryptocurrency (26)

- DeFi (5)

- Bitcoin (30)

- Trump (5)

- Ethereum (8)

- Pudgy Penguins (6)

- NFT (5)

- Solana (5)

- cryptocurrency (6)

- bitcoin (7)

- Plasma (5)

- Zcash (12)

- Aster (10)

- nbis stock (5)

- iren stock (5)

- crypto (7)

- ZKsync (5)

- irs stimulus checks 2025 (6)

- pi (6)

- hims stock (4)

- kimberly clark (5)

- uae (5)